- Home

- Services

- About

- News

- Contact

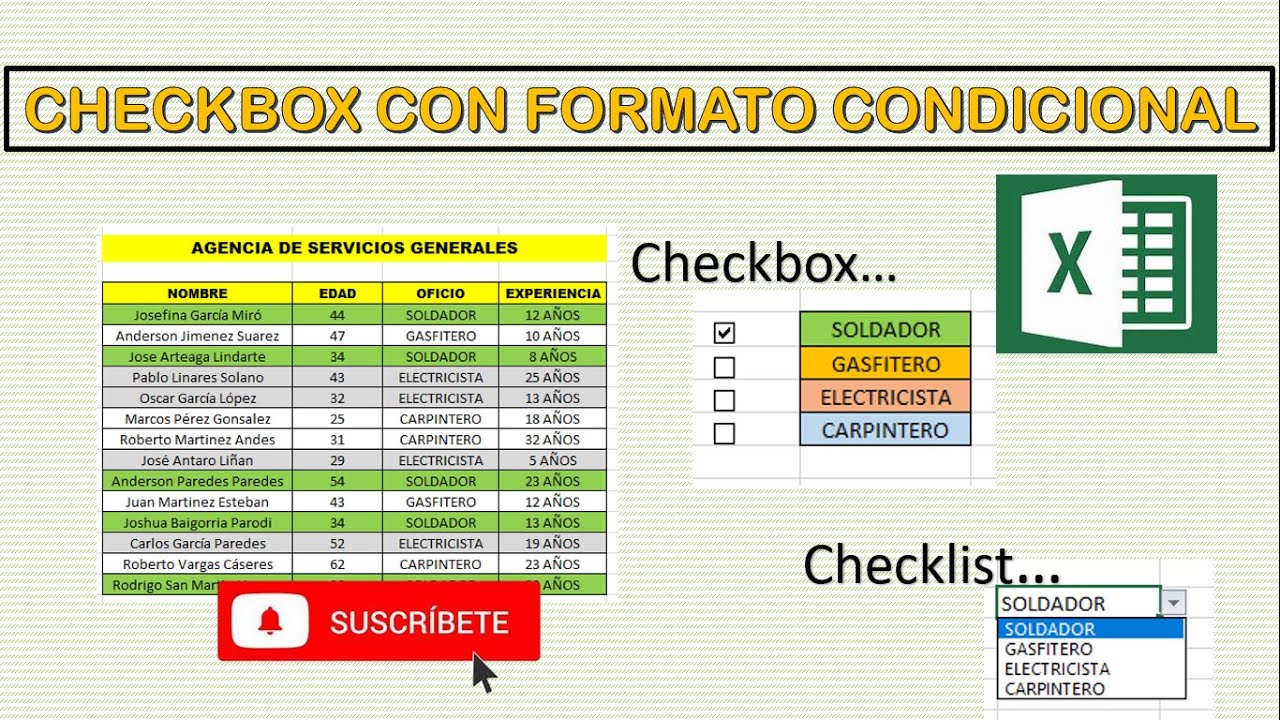

- Como hacer checkbox en excel para mac

- Download futari wa pretty cure mp4 sub indo

- License key detroit become human pc

- Fortnite for ps3 download

- Jai radha madhav jagjit singh mp3 song download

- Microkingdom controller driver

- Insult order english patch uncensored

- Immortals of meluha epub file download

- How to download chrome on windows 11

- Hitfilm pro countryboy

- Delphi ds150e software download deutsch

- Old version xampp control panel v3-2-1 download free

- 5e character builder steps

- Svd facebook download

- Quickbooks 2013 premier download

- How do u get aimbot in fortnite

Use the View menu to select a display option. To show or hide line numbers or command numbers, select Line numbers or Command numbers from the View menu. For line numbers, you can also use the keyboard shortcut Control+L. If you enable line or command numbers, Databricks saves your preference and shows them in all of your other notebooks for that browser.

Command numbers above cells link to that specific command. If you click the command number for a cell, it updates your URL to be anchored to that command.


You use Azure Databricks notebooks to develop data science and machine learning workflows and to collaborate with colleagues across engineering, data science, machine learning, and BI teams. Azure Databricks notebooks provide real-time coauthoring and automated versioning. You can work in Azure Databricks notebooks using Python, SQL, Scala, and R and customize your environment with the libraries of your choice. Azure Databricks notebooks also provide built-in data visualizations. You can schedule notebooks to automatically run workflows. [...] .ipynb format, and build and share dashboards.

Click your username at the top right of the workspace and select User Settings from the drop-down.

Notebooks use two types of cells: code cells and markdown cells. Markdown cells contain markdown code that renders into text and graphics when the cell is executed. You can run each cell individually or run the whole notebook at once. The notebook toolbar includes menus and icons that you can use to manage and edit the notebook.

When you invoke a language magic command, the command is dispatched to the REPL in the execution context for the notebook. Variables defined in one language (and hence in the REPL for that language) are not available in the REPL of another language. REPLs can share state only through external resources such as files in DBFS or objects in object storage.

Notebooks also support a few auxiliary magic commands:

- %sh: Allows you to run shell code in your notebook. To fail the cell if the shell command has a non-zero exit status, add the -e option. This command runs only on the Apache Spark driver, and not the workers. To run a shell command on all nodes, use an init script.

- %fs: Allows you to use dbutils filesystem commands. For example, to run the dbutils.fs.ls command to list files, you can specify %fs ls instead. For more information, see How to work with files on Azure Databricks.
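The relationship between %fs ls and dbutils.fs.ls can be sketched in Python. This is a minimal sketch, not an official wrapper: `list_names` is a hypothetical helper, and the `os.listdir` fallback is an assumption added only so the snippet also runs outside a notebook, where `dbutils` is not defined.

```python
import os


def list_names(path):
    """Return the sorted entry names under `path`."""
    try:
        # `dbutils` is provided by the Databricks notebook environment;
        # this is the call that `%fs ls <path>` stands in for.
        entries = dbutils.fs.ls(path)
        return sorted(entry.name for entry in entries)
    except NameError:
        # Assumption for local runs only: no `dbutils` is available,
        # so list the local filesystem instead of DBFS.
        return sorted(os.listdir(path))
```

Inside a notebook the first branch runs and the path refers to DBFS; anywhere else the helper falls back to the local directory listing.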
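Because variables defined in one language's REPL are not visible in another, state has to pass through an external resource such as a file. The following is a minimal sketch of that idea under stated assumptions: the helper names and the JSON format are illustrative choices, and a local temporary directory stands in for a DBFS or object storage location.

```python
import json
import os
import tempfile


def save_state(path, state):
    """Write a dict of JSON-serializable values to `path`."""
    with open(path, "w", encoding="utf-8") as f:
        json.dump(state, f)


def load_state(path):
    """Read back the dict written by `save_state`."""
    with open(path, "r", encoding="utf-8") as f:
        return json.load(f)


# Stand-in location (assumption); in a notebook this would be a shared path.
state_path = os.path.join(tempfile.mkdtemp(), "state.json")
save_state(state_path, {"threshold": 0.5, "table": "events"})
restored = load_state(state_path)
```

A cell in another language would read the same file with its own JSON library; nothing is passed between the REPLs directly.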
